Description

W3 Total Cache improves the SEO and user experience of your site by increasing website performance and reducing download times via features like content delivery network (CDN) integration.

The only web host agnostic WordPress Performance Optimization (WPO) framework recommended by countless web developers and web hosts. Trusted by numerous companies like: AT&T, stevesouders.com, mattcutts.com, mashable.com, smashingmagazine.com, makeuseof.com, kiss925.com, pearsonified.com, lockergnome.com, johnchow.com, ilovetypography.com, webdesignerdepot.com, css-tricks.com and tens of thousands of others.

Installation

Deactivate and uninstall any other caching plugin you may be using. Pay special attention if you have customized the rewrite rules for fancy permalinks, have previously installed a caching plugin or have any browser caching rules, as W3TC will automate management of all best practices.

Also make sure wp-content/ and wp-content/uploads/ (temporarily) have 777 permissions before proceeding, using your web hosting control panel or your FTP / SSH account, e.g. in the terminal: # chmod 777 /var/www/vhosts/domain.com/httpdocs/wp-content/

Log in as an administrator to your WordPress Admin account. Using the “Add New” menu option under the “Plugins” section of the navigation, you can either search for: w3 total cache or, if you’ve downloaded the plugin already, click the “Upload” link, find the .zip file you downloaded and then click “Install Now”. Or you can unzip and FTP upload the plugin to your plugins directory (wp-content/plugins/). In either case, when done wp-content/plugins/w3-total-cache/ should exist.

Locate and activate the plugin on the “Plugins” page. Page caching will automatically be running in basic mode.

Set the permissions of wp-content and wp-content/uploads back to 755, e.g. in the terminal: # chmod 755 /var/www/vhosts/domain.com/httpdocs/wp-content/

Now click the “Settings” link to proceed to the “General Settings” tab; in most cases, “disk enhanced” mode for page cache is a “good” starting point.
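The temporary permission change described in the installation steps can also be scripted. The following is a minimal Python sketch, not part of the plugin: the helper name `temporary_mode` is hypothetical, and it restores whatever mode the directory had before rather than forcing 755, which is an assumption that differs slightly from the instructions above.

```python
import os
import stat
from contextlib import contextmanager


@contextmanager
def temporary_mode(path, mode):
    """Set `path` to `mode` inside the block, then restore the previous mode.

    Hypothetical helper for illustration; it is not a W3 Total Cache API.
    """
    original = stat.S_IMODE(os.stat(path).st_mode)  # remember the current permission bits
    os.chmod(path, mode)
    try:
        yield
    finally:
        os.chmod(path, original)  # restored even if the block raises


# Example usage (path taken from the instructions above):
# with temporary_mode("/var/www/vhosts/domain.com/httpdocs/wp-content", 0o777):
#     ...install and activate the plugin here...
```

Restoring in a `finally` clause means the directory does not stay world-writable if the installation step fails partway through.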

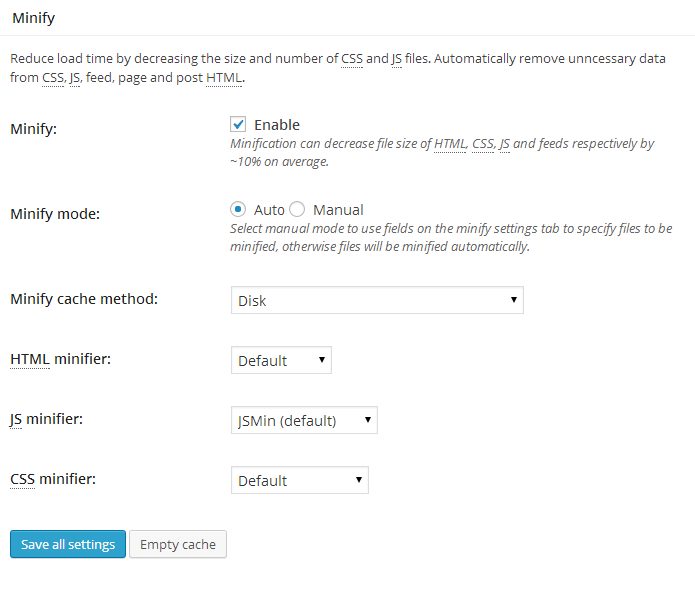

The “Compatibility mode” option, found in the advanced section of the “Page Cache Settings” tab, enables functionality that optimizes the interoperability of caching with WordPress. It is disabled by default, but highly recommended. Years of testing in hundreds of thousands of installations have helped us learn how to make caching behave well with WordPress. The tradeoff is that disk enhanced page cache performance under load tests will be decreased by 20% at scale.

Recommended: On the “Minify Settings” tab, all of the recommended settings are preset. If auto mode causes issues with your web site’s layout, switch to manual mode and use the help button to simplify discovery of your CSS and JS files and groups. Pay close attention to the method and location of your JS group embeddings. See the plugin’s FAQ for more information on usage.

Recommended: On the “Browser Cache” tab, HTTP compression is enabled by default. Make sure to enable other options to suit your goals.

Recommended: If you already have a content delivery network (CDN) provider, proceed to the “Content Delivery Network” tab, populate the fields and set your preferences. If you do not use the Media Library, you will need to import your images etc. into the default locations. Use the Media Library Import Tool on the “Content Delivery Network” tab to perform this task. If you do not have a CDN provider, you can still improve your site’s performance using the “Self-hosted” method. On your own server, create a subdomain and matching DNS Zone record, e.g. static.domain.com, and configure FTP options on the “Content Delivery Network” tab accordingly. Be sure to FTP upload the appropriate files, using the available upload buttons.

Optional: On the “Database Cache” tab, the recommended settings are preset.

If using a shared hosting account, use the “disk” method with caution: the response time of the disk may not be fast enough, so this option is disabled by default. Try object caching instead for shared hosting.

Optional: On the “Object Cache” tab, all of the recommended settings are preset. If using a shared hosting account, use the “disk” method with caution: the response time of the disk may not be fast enough, so this option is disabled by default. Test this option with and without database cache to ensure that it provides a performance increase.

Optional: On the “User Agent Groups” tab, specify any user agents, like mobile phones if a mobile theme is used.

FAQ

Why does speed matter?

Search engines like Google measure and factor in the speed of web sites in their ranking algorithm. When they recommend a site they want to make sure users find what they’re looking for quickly. So in effect you and Google should have the same objective.

Speed is among the most significant success factors web sites face. In fact, your site’s speed directly affects your income (revenue). Some high traffic sites conducted research and uncovered the following:

- Google.com: +500 ms (speed decrease) led to a 20% traffic loss.
- Yahoo.com: +400 ms (speed decrease) led to a 5-9% full-page traffic loss (visitor left before the page finished loading).
- Amazon.com: +100 ms (speed decrease) led to a 1% sales loss.

A fraction of a second is not a long time, yet the impact is quite significant. Even if you’re not a large company (or just hope to become one), a loss is still a loss. However, there is a solution to this problem; take advantage of it.

Many of the other consequences of poor performance were discovered more than a decade ago:

- Lower perceived credibility (Fogg et al. 2001)
- Lower perceived quality (Bouch, Kuchinsky, and Bhatti 2000)
- Increased user frustration (Ceaparu et al. 2004)
- Increased blood pressure (Scheirer et al. 2002)
- Reduced flow rates (Novak, Hoffman, and Yung 200)
- Reduced conversion rates (Akamai 2007)
- Increased exit rates (Nielsen 2000)
- Sites are perceived as less interesting (Ramsay, Barbesi, and Preece 1998)
- Sites are perceived as less attractive (Skadberg and Kimmel 2004)

There are a number of resources that have been documenting the role of performance in success on the web. W3 Total Cache exists to give you a framework to tune your application or site without having to do years of research.

Why is W3 Total Cache better than other caching solutions?

It’s a complete framework. Most cache plugins available do a great job at achieving a couple of performance aims. Our plugin remedies numerous performance reducing aspects of any web site, going far beyond merely reducing CPU usage (load) and bandwidth consumption for HTML pages alone. Equally important, the plugin requires no theme modifications, modifications to your .htaccess (mod_rewrite rules) or programming compromises to get started. Most importantly, it’s the only plugin designed to optimize all practical hosting environments, small or large. The options are many and setup is easy.

I’ve never heard of any of this stuff; my site is fine, no one complains about the speed. Why should I install this?

Rarely do readers take the time to complain. They typically just stop browsing earlier than you’d prefer and may not return altogether. This is the only plugin specifically designed to make sure that all aspects of your site are as fast as possible. Google is placing more emphasis on site speed; this plugin helps with that too. It’s in every web site owner’s best interest to make sure that the performance of their site is not hindering its success.

Which WordPress versions are supported?

To use all features in the suite, a minimum of WordPress 2.8 with PHP 5.3 is required. Earlier versions will benefit from our Media Library Importer to get them back on the upgrade path and into a CDN of their choosing.

Why doesn’t minify work for me?

Great question.

W3 Total Cache uses several open source tools to attempt to combine and optimize CSS, JavaScript, HTML etc. Unfortunately, some trial and error is required on the part of developers to make sure that their code can be successfully minified with the various libraries W3 Total Cache supports. Even if developers do test their code thoroughly, they cannot be sure of its interoperability with other code your site may have. The fault does not lie with any single party: because there are thousands of plugin and theme combinations that a given site can have, there are millions of possible combinations of CSS, JavaScript etc.

A good rule of thumb is to try auto mode first. If that fails, work with a developer to identify the code that is not compatible, start with combine only mode (the safest optimization) and increase the optimization to the point just before functionality (JavaScript) or user interface / layout (CSS) breaks in your site.

We’re always working to make this simpler and more straightforward in future releases, but this is not an undertaking we can realize on our own. When you find a plugin, theme or file that is not compatible with minification, reach out to the developer and ask them either to provide a minified version with their distribution or otherwise make sure their code is minification-friendly.
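To make the idea of "combine only" concrete, here is a small Python sketch of what combining without minifying could look like. It is an illustration under assumptions, not the plugin's implementation: the function name `combine_only` and the comment markers it inserts are hypothetical.

```python
def combine_only(sources):
    """Concatenate (name, text) pairs in order without altering the code itself.

    Illustrative only: each file is preceded by a comment naming its origin,
    which is valid in both CSS and JavaScript. No whitespace or identifiers
    inside the files are changed, which is why this is the lowest-risk step.
    """
    parts = []
    for name, text in sources:
        parts.append(f"/* {name} */\n{text.rstrip()}\n")
    return "\n".join(parts)


bundle = combine_only([
    ("a.css", "a{color:red}\n"),
    ("b.css", "b{margin:0}"),
])
print(bundle)
```

Because the file contents are left byte-for-byte intact, any breakage at this stage points to load order rather than to the optimizer, which narrows down where to look before enabling stronger settings.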

Who do you recommend as a CDN (Content Delivery Network) provider?

That depends on how you use your site and where most of your readers read your site (regionally).

What about comments? Does the plugin slow down the rate at which comments appear?

On the contrary, as with any other action a user can perform on a site, faster performance will encourage more of it. The cache is so quickly rebuilt in memory that it’s no trouble to show visitors the most current version of a post that’s experiencing the Digg, Slashdot, Drudge Report, Yahoo Buzz or Twitter effect.

Will the plugin interfere with other plugins or widgets?

No. On the contrary, if you use the minify settings you will improve their performance by several times.

Does this plugin work with WordPress in network mode?

Indeed it does.

Does this plugin work with BuddyPress (bbPress)?

Will this plugin speed up WP Admin?

Yes, indirectly: if you have a lot of bloggers working with you, you will find that it feels like you have a server dedicated only to WP Admin once this plugin is enabled. The result is increased productivity.

Which web servers do you support?

We are aware of no incompatibilities with 1.3+, 0.7+, 5+ or 4.0.2+.

Is this plugin server cluster and load balancer friendly?

Yes, built from the ground up with scale and current hosting paradigms in mind.

What is the purpose of the “Media Library Import” tool and how do I use it?

The media library import tool is for old or “messy” WordPress installations that have attachments (images etc. in posts or pages) scattered about the web server or “hot linked” to 3rd party sites instead of properly using the media library. The tool will scan your posts and pages for the cases above, copy the files to your media library, update your posts to use the new link addresses and produce a .htaccess file containing the list of permanent redirects, so search engines can find the files in their new location. You should back up your database before performing this operation.

How do I find the JS and CSS to optimize (minify) them with this plugin?

Use the “Help” button available on the Minify settings tab. Once open, the tool will look for and populate the CSS and JS files used in each template of the site for the active theme. To then add a file to the minify settings, click the checkbox next to that file. The embed location of JS files can also be specified to improve page render performance. Minify settings for all installed themes can be managed from the tool as well by selecting the theme from the drop down menu. Once done configuring minify settings, click the apply and close button, then save settings in the Minify settings tab.

I don’t understand what a CDN has to do with caching, that’s completely different, no?

Technically no: a CDN is a high performance cache that stores static assets (your theme files, media library etc.) in various locations throughout the world in order to provide low latency access to them by readers in those regions.

How do I use an Origin Pull (Mirror) CDN?

Log in to your CDN provider’s control panel or account management area. Following any set up steps they provide, create a new “pull zone” or “bucket” for your site’s domain name. If there’s a set up wizard or any troubleshooting tips your provider offers, be sure to review them.

In the CDN tab of the plugin, enter the hostname your CDN provider provided in the “replace site’s hostname with” field. You should always do a quick check by opening a test file from the CDN hostname. Troubleshoot with your CDN provider until this test is successful.

Now go to the General tab, click the checkbox and save the settings to enable CDN functionality, then empty the cache for the changes to take effect.
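The "replace site's hostname with" setting amounts to a hostname substitution on asset URLs, which also tells you what URL to open for the quick check. The Python sketch below shows that substitution under stated assumptions: `to_cdn_url` is a hypothetical helper and `cdn.example.net` is a placeholder hostname, neither of which comes from the plugin or from any CDN provider.

```python
from urllib.parse import urlsplit, urlunsplit


def to_cdn_url(url, site_host, cdn_host):
    """Return `url` with `site_host` swapped for `cdn_host`; other hosts are left alone.

    Hypothetical illustration of the hostname replacement, not plugin code.
    """
    parts = urlsplit(url)
    if parts.netloc != site_host:
        return url  # not one of our own assets, so do not rewrite it
    return urlunsplit(parts._replace(netloc=cdn_host))


# Build the address of a test file as it would be served from the CDN hostname.
test_url = to_cdn_url(
    "http://domain.com/wp-content/themes/a/style.css",
    "domain.com",
    "cdn.example.net",  # placeholder: use the hostname your provider gave you
)
print(test_url)
```

Opening the resulting address in a browser is the manual check the answer above describes; if it does not return the same file as the original URL, the pull zone is not yet working.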

How do I configure Amazon Simple Storage Service (Amazon S3) or Amazon CloudFront as my CDN?

First, create an Amazon S3 account (unless using origin pull); it may take several hours for your account credentials to be functional. Next, you need to obtain your “Access key ID” and “Secret key” from the “Access Credentials” section of “My Account.” Make sure the status is “active.”

Next, make sure that “Amazon Simple Storage Service (Amazon S3)” is the selected “CDN type” on the “General Settings” tab, then save the changes. Now on the “Content Delivery Network Settings” tab enter your “Access key” and “Secret key,” then enter a name (avoid special characters and spaces) for your bucket in the “Create a bucket” field and click the button of the same name. If using an existing bucket, simply specify the bucket name in the “Bucket” field. Click the “Test S3 Upload” button and make sure that the test is successful; if not, check your settings and try again. Save your settings.

Unless you wish to use CloudFront, you’re almost done; if you’re using CloudFront, skip to the next paragraph. Go to the “General Settings” tab, click the “Enable” checkbox and save the settings to enable CDN functionality. Empty the cache for the changes to take effect. If preview mode is active you will need to “deploy” your changes for them to take effect.

To use CloudFront, perform all of the steps above, except select the “Amazon CloudFront” “CDN type” in the “Content Delivery Network” section of the “General Settings” tab. When creating a new bucket, the distribution ID will automatically be populated. Otherwise, proceed to the AWS Management Console and create a new distribution: select the S3 Bucket you created earlier as the “Origin,” and enter a CNAME if you wish to add one or more to your DNS Zone. Make sure that “Distribution Status” is enabled and “State” is deployed.

Now on the “Content Delivery Network” tab of the plugin, copy the subdomain found in the AWS Management Console and enter the CNAME used for the distribution in the “CNAME” field. You may optionally specify up to 10 hostnames to use rather than the default hostname; doing so will improve the render performance of your site’s pages. Additional hostnames should also be specified in the settings for the distribution you’re using in the AWS Management Console.

Now go to the General tab, click the “Enable” checkbox and save the settings to enable CDN functionality, then empty the cache for the changes to take effect. If preview mode is active you will need to “deploy” your changes for them to take effect.

How do I configure Rackspace Cloud Files as my CDN?

Next, in the “Content Delivery Network” section of the “General Settings” tab, select Rackspace Cloud Files as the “CDN Type.” Now, in the “Configuration” section of the “Content Delivery Network” tab, enter the “Username” and “API key” associated with your account (found in the API Access section of your account) in the respective fields. Next, enter a name for the container to use (avoid special characters and spaces). If the operation is successful, the container’s ID will automatically appear in the “Replace site’s hostname with” field. You may optionally specify the container name and container ID of an existing container if you wish.

Click the “Test Cloud Files Upload” button and make sure that the test is successful; if not, check your settings and try again. Save your settings. You’re now ready to export your media library, theme and any other files to the CDN.

You may optionally specify up to 10 hostnames to use rather than the default hostname; doing so will improve the render performance of your site’s pages. Now go to the General tab, click the “Enable” checkbox and save the settings to enable CDN functionality, then empty the cache for the changes to take effect. If preview mode is active you will need to “deploy” your changes for them to take effect.
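Using several hostnames only helps if a given file is always served from the same one, otherwise browsers would download and cache it more than once. The Python sketch below shows one way such a stable assignment could work. It is an assumption for illustration: the source does not say how the plugin distributes files across hostnames, and `pick_hostname` and the `cdn1`/`cdn2` names are hypothetical.

```python
import zlib


def pick_hostname(asset_path, hostnames):
    """Map an asset path to one of the configured hostnames, always the same one.

    Illustrative only: a CRC32 of the path is used because, unlike Python's
    built-in hash(), it gives the same value on every run and every server.
    """
    if not hostnames:
        raise ValueError("at least one hostname is required")
    index = zlib.crc32(asset_path.encode("utf-8")) % len(hostnames)
    return hostnames[index]


hosts = ["cdn1.domain.com", "cdn2.domain.com", "cdn3.domain.com"]  # placeholder names
for path in ("/wp-content/themes/a/style.css", "/wp-content/uploads/logo.png"):
    print(path, "->", pick_hostname(path, hosts))
```

Spreading requests across hostnames lets older browsers open more parallel connections, which is the render performance gain mentioned above.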

What is the purpose of the “modify attachment URLs” button?

If the domain name of your site has changed, this tool is useful in updating your posts and pages to use the current addresses. For example, if your site used to be www.domain.com, and you decided to change it to domain.com, the result would either be many “broken” images or many unnecessary redirects (which slow down the visitor’s browsing experience). You can use this tool to correct this and similar cases. Correcting the URLs of your images also allows the plugin to do a better job of determining which images are actually hosted with the CDN. As always, it never hurts to back up your database first.
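The www.domain.com to domain.com example can be sketched as a search and replace over post content. The Python below is a hedged illustration of that idea only: `update_attachment_urls` is a hypothetical name, and the plugin's own tool works against the WordPress database rather than a plain string.

```python
import re


def update_attachment_urls(content, old_host, new_host):
    """Rewrite http(s) URLs on `old_host` to `new_host` inside post content.

    Illustrative sketch. The lookahead requires the hostname to end at a
    URL boundary so that look-alike hosts such as
    www.domain.com.example.org are not touched.
    """
    pattern = re.compile(
        r"(https?://)" + re.escape(old_host) + r"(?=[/\"'\s?#]|$)"
    )
    # A function replacement avoids any backslash handling in new_host.
    return pattern.sub(lambda m: m.group(1) + new_host, content)


post = '<img src="http://www.domain.com/wp-content/uploads/a.png">'
print(update_attachment_urls(post, "www.domain.com", "domain.com"))
```

Rewriting the stored addresses once removes the per-request redirect that visitors would otherwise incur on every image.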

Is this plugin compatible with TDO Mini Forms?

Captcha and recaptcha will work fine, however you will need to prevent any pages with forms from being cached. Add the page’s URI to the “Never cache the following pages” box on the Page Cache Settings tab.

Is this plugin compatible with GD Star Rating?

Follow these steps:

- Enable dynamic loading of ratings by checking GD Star Rating - Settings - Features “Cache support option”.
- If Database cache is enabled in W3 Total Cache, add wpgdsr to the “Ignored query stems” field in the Database Cache settings tab, otherwise ratings will not be updated after voting.
- Empty all caches.

I see garbage characters instead of the normal web site, what’s going on here?

If a theme or its files use the call phpflush or the function flush, that will interfere with the plugin’s normal operation, making the plugin send cached files before essential operations have finished. The flush call is no longer necessary and should be removed.

How do I cache only the home page?

Add /.+ to the page cache “Never cache the following pages” option on the page cache settings tab.

I’m getting blank pages or 500 error codes when trying to upgrade on WordPress in network mode

First, make sure the plugin is not active (disabled) network-wide. Then make sure it’s deactivated network-wide. Now you should be able to successfully upgrade without breaking your site.

A notification about file owner appears along with an FTP form, how can I resolve this?

The plugin uses WordPress FileSystem functionality to write to files. It checks whether the file owner and file owner group of created files match the process owner. If this is not the case, it cannot write or modify files. Typically, you should tell your web host about the permission issue and they should be able to resolve it.

You can however try adding define('FS_METHOD', 'direct'); to wp-config.php to circumvent the file and folder checks.

This is too good to be true, how can I test the results?

You will be able to see it instantly on each page load; for tangible metrics, use a page speed measurement tool.

I don’t have time to deal with this, but I know I need it. Will you help me?

Please contact us and we’ll get you acclimated so you can “set it and forget it.”

Install the plugin to read the full FAQ on the plugin’s FAQ tab.

Hello, I could give 0 star rating but kindness of WordPress, they don’t allow so. Please refer to below threads. It has been many months, there is no reply from the author. Such kind of disappointing experience and dishonor of my time for reporting below issues. Another thing, it would be great if respected author consider do not use aggressive nagging, pop-up, pre-activation of third-party related extensions such as New Relic, Swarmify, Fragment Cache, as well as pop-up for subscribing own newsletter, you shouldn’t keep checkbox checked by default. Also, it would be great if plugin author thinks in minimal approach, to provide less options. Thanks & Regards, Gulshan.

I'm filling a VB.Net DataTable from a csv file with the following code. The date values are being read as integers since they exist in the csv file in this format (70514). This represents 5/14/07. I need to format these as Dates in my VB.Net DataTable, but I get an error if I attempt to do so after the DataTable has been filled, something about data existing in the table so this is not allowed. I then tried to do this before the csv file fills the DataTable by creating the columns before filling. It doesn't fill my columns but adds the 'same' columns, so I have 36 columns instead of 18.

    sConnectionString = "Provider=Microsoft.Jet.OLEDB.4.0;Data Source=c: JB CUSTDATA Tri Part Screw CSV;Extended Properties=Text;"
    Dim jbConn As New OleDbConnection(sConnectionString)
    jbConn.Open()
    Dim cmdSelect As New OleDbCommand("Select * from ESCHED.csv", jbConn)
    Dim Adapter As New OleDbDataAdapter
    Adapter.SelectCommand = cmdSelect
    Adapter.Fill(tblDeliveries)

I would appreciate your ideas on how to get these columns as type DateTime.

Thank You, JMO9966.

I think you're going to have to handle it like this. First, fill your datatable. Then add a column for each of your dates, making its datatype datetime. Then cycle through all the rows in the filled table and, from the integer representation that the dataadapter loads, calculate the date and put it in the added date column. The code would be on the lines of

    ' Add a Date column, then derive each date from the integer value (e.g. 70514)
    Dim dc As New DataColumn("myDate", GetType(Date))
    tblDeliveries.Columns.Add(dc)
    For Each dr As DataRow In tblDeliveries.Rows
        Dim DateString As String = dr(dateIntegerColumn).ToString
        Dim thisYear As Integer = CInt("200" & DateString.Substring(0, 1))
        Dim thisMonth As Integer = CInt(DateString.Substring(1, 2))
        Dim thisDay As Integer = CInt(DateString.Substring(3, 2))
        dr("myDate") = New DateTime(thisYear, thisMonth, thisDay)
    Next

You might be able to use a more elegant conversion function than that, but I don't see a way of avoiding doing it value by value.

Thanks guys, I'm testing Sancler's method since it's less code and I'm not familiar with LearnedOne's method. I get this error though: Index and length must refer to a location within the string. Parameter name: length

    For Each dr As DataRow In tblDeliveries.Rows
        'For rows = 0 To tblDeliveries.Rows.Count - 1
        'Dim dr As DataRow In tblDeliveries.Rows
        Dim DateString As String = dr(2).ToString
        Dim thisYear As Integer = CInt("200" & DateString.Substring(0, 1))
        Dim thisMonth As Integer = CInt(DateString.Substring(1, 2))
        Dim thisDay As Integer = CInt(DateString.Substring(3, 2))
        'dt("Date1") = New DateTime(thisYear, thisMonth, thisDay)
        dr("Date1") = New DateTime(thisYear, thisMonth, thisDay)
    Next
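One possible cause of that error, which the thread does not confirm, is that the substring offsets assume every value is exactly five characters long. If the integers are YYMMDD with leading zeros dropped (an assumption based on 70514 meaning 5/14/07), then an empty cell or a date from the year 2000 is shorter than five characters, and a date from 2010 onward is six. The sketch below illustrates padding back to six digits before splitting; it is written in Python rather than VB.Net purely for illustration, and `parse_yymmdd` is a hypothetical name.

```python
from datetime import date, datetime


def parse_yymmdd(value):
    """Convert an integer such as 70514 (YYMMDD without leading zeros) to a date.

    Assumes the column holds YYMMDD values; this is inferred from the single
    example in the question, not confirmed by the thread.
    """
    text = str(value).strip().zfill(6)  # 70514 -> "070514", 514 -> "000514"
    if len(text) != 6 or not text.isdigit():
        raise ValueError(f"not a YYMMDD value: {value!r}")
    return datetime.strptime(text, "%y%m%d").date()


print(parse_yymmdd(70514))   # the example from the question
print(parse_yymmdd(100514))  # a six-digit value from a later year
```

The same idea carries over to the VB.Net loop: pad the string to a fixed width first, and skip or report rows where the cell is empty, so that fixed offsets are always valid.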

A.1.1 Setting Parameters in 793UPGPARAMS.txt

Follow this procedure to set parameters in the 793UPGPARAMS.txt file. This procedure is applicable for upgrades to Oracle BI Applications release 7.9.3.

To set parameters in the 793UPGPARAMS.txt file:

Navigate to the folder OracleBI\dwrep\Upgrade\Informatica\ParameterFiles and copy the file 793UPGPARAMS.txt into the SrcFiles folder on the Informatica Server machine, for example, server\infashared\SrcFiles.

Set the parameter $$ETLPROCWID to the latest ETLPROCWID value from the database. You can get this value from WPARAMG.ETLPROCWID.

Set the parameter $$DATASOURCENUMID to the relevant value from the source system setup.

Search for parameter values defined with the 'TO_DATE' function. Edit the function to use the appropriate function for data conversion based on the database type:

- For Oracle databases, use 'TO_DATE('1899-01-01 00:00:00', 'YYYY-MM-DD HH24:MI:SS')'
- For SQL Server databases, use 'CONVERT(datetime,'1899-01-01')'
- For DB2 databases, use 'TO_DATE('1899-01-01 00:00:00', 'YYYY-MM-DD HH24:MI:SS')'
- For Teradata databases, use 'cast('1899-01-01' as timestamp format 'YYYY-MM-DD')'

A.1.2 Setting Parameters in 794UPGPARAMS.txt

Follow this procedure to set parameters in the 794UPGPARAMS.txt file. This procedure is applicable for upgrades to Oracle BI Applications release 7.9.4.

To set parameters in the 794UPGPARAMS.txt file:

Navigate to the folder OracleBI\dwrep\Upgrade\Informatica\ParameterFiles and copy the file 794UPGPARAMS.txt into the SrcFiles folder on the Informatica Server machine, for example, server\infashared\SrcFiles.

Set the parameter $$ETLPROCWID to the latest ETLPROCWID value from the database. You can get this value from WPARAMG.ETLPROCWID.

Set the parameter $$DATASOURCENUMID to the relevant value from the source system setup.

Search for parameter values defined with the 'TO_DATE' function. Edit the function to use the appropriate function for data conversion based on the database type:

- For Oracle databases, use 'TO_DATE('1899-01-01 00:00:00', 'YYYY-MM-DD HH24:MI:SS')'
- For SQL Server databases, use 'CONVERT(datetime,'1899-01-01')'
- For DB2 databases, use 'TO_DATE('1899-01-01 00:00:00', 'YYYY-MM-DD HH24:MI:SS')'
- For Teradata databases, use 'cast('1899-01-01' as timestamp format 'YYYY-MM-DD')'

A.1.3 Setting Parameters in 795UPGPARAMS.txt

Follow this procedure to set parameters in the 795UPGPARAMS.txt file. This procedure is applicable for upgrades to Oracle BI Applications release 7.9.5.

To set parameters in the 795UPGPARAMS.txt file:

Navigate to the folder OracleBI\dwrep\Upgrade\Informatica\ParameterFiles and copy the file 795UPGPARAMS.txt into the SrcFiles folder on the Informatica Server machine, for example, server\infashared\SrcFiles.

Set the parameter $$ETLPROCWID to the latest ETLPROCWID value from the database.

You can get this value from WPARAMG.ETLPROCWID.

Set the parameter $$DATASOURCENUMID to the relevant value from the source system setup.

Search for parameter values defined with the 'TO_DATE' function. Edit the function to use the appropriate function for data conversion based on the database type:

- For Oracle databases, use 'TO_DATE('1899-01-01 00:00:00', 'YYYY-MM-DD HH24:MI:SS')'
- For SQL Server databases, use 'CONVERT(datetime,'1899-01-01')'
- For DB2 databases, use 'TO_DATE('1899-01-01 00:00:00', 'YYYY-MM-DD HH24:MI:SS')'
- For Teradata databases, use 'cast('1899-01-01' as timestamp format 'YYYY-MM-DD')'

A.1.4 Setting Parameters in 7951UPGPARAMS.txt

Follow this procedure to set parameters in the 7951UPGPARAMS.txt file. This procedure is applicable for upgrades to Oracle BI Applications release 7.9.5.1.

To set parameters in the 7951UPGPARAMS.txt file:

Navigate to the folder OracleBI\dwrep\Upgrade\Informatica\ParameterFiles and copy the file 7951UPGPARAMS.txt into the SrcFiles folder on the Informatica Server machine, for example, server\infashared\SrcFiles.

Set the parameter $$ETLPROCWID to the latest ETLPROCWID value from the database. You can get this value from WPARAMG.ETLPROCWID.

Set the parameter $$DATASOURCENUMID to the relevant value from the source system setup.

Search for parameter values defined with the 'TO_DATE' function. Edit the function to use the appropriate function for data conversion based on the database type:

- For Oracle databases, use 'TO_DATE('1899-01-01 00:00:00', 'YYYY-MM-DD HH24:MI:SS')'
- For SQL Server databases, use 'CONVERT(datetime,'1899-01-01')'
- For DB2 databases, use 'TO_DATE('1899-01-01 00:00:00', 'YYYY-MM-DD HH24:MI:SS')'
- For Teradata databases, use 'cast('1899-01-01' as timestamp format 'YYYY-MM-DD')'

A.1.5 Setting Parameters in 796UPGPARAMS.txt

Follow this procedure to set parameters in the 796UPGPARAMS.txt file. This procedure is applicable for upgrades to Oracle BI Applications release 7.9.6.

To set parameters in the 796UPGPARAMS.txt file. Navigate to the folder OracleBI dwrep Upgrade Informatica ParameterFiles and copy the file 796UPGPARAMS.txt into the SrcFiles folder on the Informatica Server machine, for example, server infashared SrcFiles. In the 796UPGPARAMS.txt file, set the following parameters:. $$ETLPROCWID. Set this parameter to the relevant value from the source system setup. You can get this value from WPARAMG.ETLPROCWID. $$DATASOURCENUMID.

Set this parameter to the relevant value from the source system setup. $$INITIALEXTRACTDATE. Set this parameter to the initial extraction date of the data warehouse. $$WHDATASOURCENUMID. Set this parameter to the data source number ID you have set up for the data warehouse.

This value should be the same data source number ID in the warehouse table WDAYD. If this is not set up correctly, the upgrade maps will fail with a NULL ROWWID. Make sure this parameter is set up appropriately for your environment.

$$STARTDATE. Get this value from the Source System Parameters tab in DAC. $$ENDDATE. Get this value from the Source System Parameters tab in DAC. $$MASTERORG.

Get this value from the Source System Parameters tab in DAC. $$INVPRODCATSETID1. Get this value from the Source System Parameters tab in DAC. $$PRODCATSETID1. Get this value from the Source System Parameters tab in DAC. Set the parameter $$ISSOURCEPRE80 to Y if your source OLTP application was on a version prior to Siebel 8.0 before you began the upgrade process.

Otherwise, set this parameter to N. Search for parameter values defined with the 'TODATE' function. Edit the function to use the appropriate function for data conversion based on the database type: - For Oracle databases, use 'TODATE('1899-01-01 00:00:00', 'YYYY-MM-DD HH24:MI:SS')' - For SQL Server databases, use 'CONVERT(datetime,'1899-01-01')' - For DB2 databases, use 'TODATE('1899-01-01 00:00:00', 'YYYY-MM-DD HH24:MI:SS')' - For Teradata databases, use 'cast('1899-01-01' as timestamp format 'YYYY-MM-DD')'. A.1.6 Setting Parameters in 7961UPGPARAMS.txt Follow this procedure to set parameters in the 7961UPGPARAMS.txt file.

This procedure is applicable for upgrades to Oracle BI Applications release 7.9.6.1. To set parameters in the 7961UPGPARAMS.txt file. Navigate to the folder OracleBI dwrep Upgrade Informatica ParameterFiles and copy the file 7961UPGPARAMS.txt into the SrcFiles folder on the Informatica Server machine, for example, server infashared SrcFiles. In the 7961UPGPARAMS.txt file, set the following parameters:. $$ETLPROCWID. Set this parameter to the relevant value from the source system setup.

You can get this value from WPARAMG.ETLPROCWID. $$DATASOURCENUMID. Set this parameter to the relevant value from the source system setup. $$INITIALEXTRACTDATE. Set this parameter to the initial extraction date of the data warehouse. $$WHDATASOURCENUMID. Set this parameter to the data source number ID you have set up for the data warehouse.

Search for parameter values defined with the 'TODATE' function. Edit the function to use the appropriate function for data conversion based on the database type:
- For Oracle databases, use 'TODATE('1899-01-01 00:00:00', 'YYYY-MM-DD HH24:MI:SS')'
- For SQL Server databases, use 'CONVERT(datetime,'1899-01-01')'
- For DB2 databases, use 'TODATE('1899-01-01 00:00:00', 'YYYY-MM-DD HH24:MI:SS')'
- For Teradata databases, use 'cast('1899-01-01' as timestamp format 'YYYY-MM-DD')'.

A.1.7 Setting Parameters in 7962UPGPARAMS.txt

Follow this procedure to set parameters in the 7962UPGPARAMS.txt file. This procedure is applicable for upgrades to Oracle BI Applications release 7.9.6.2.

Note: If your source system is PeopleSoft, you must also set the parameters in Section A.2.3, "Setting Parameters in 7962UPGPARAMS.txt for PeopleSoft Source Systems."

To set parameters in the 7962UPGPARAMS.txt file: Navigate to the folder OracleBI dwrep Upgrade Informatica ParameterFiles and copy the file 7962UPGPARAMS.txt into the SrcFiles folder on the Informatica Server machine, for example, server infashared SrcFiles. In the 7962UPGPARAMS.txt file, set the following parameters:

$$ETLPROCWID. Set this parameter to the relevant value from the source system setup. You can get this value from WPARAMG.ETLPROCWID. $$DATASOURCENUMID. Set this parameter to the relevant value from the source system setup. $$INITIALEXTRACTDATE. Set this parameter to the initial extraction date of the data warehouse.

$$WHDATASOURCENUMID. Set this parameter to the data source number ID you have set up for the data warehouse.
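Before running an upgrade, it can help to confirm that none of the parameters listed above was left empty. The Python sketch below is only an illustration: it assumes each parameter sits on its own line in the `$$NAME=value` form shown elsewhere in this text (for example `$$DFLTLANG=US`), and the function names and sample values are hypothetical.

```python
# Parameters named in the procedure above.
REQUIRED = (
    "$$ETLPROCWID",
    "$$DATASOURCENUMID",
    "$$INITIALEXTRACTDATE",
    "$$WHDATASOURCENUMID",
)


def parse_parameters(text):
    """Collect NAME=value pairs from lines whose name part contains '$$'."""
    params = {}
    for raw_line in text.splitlines():
        name, sep, value = raw_line.strip().partition("=")
        if not sep or "$$" not in name:
            continue  # skip blank lines, comments, and section headers
        params[name.strip()] = value.strip()
    return params


def missing_parameters(params, required=REQUIRED):
    """Return the required parameter names that are absent or empty."""
    return [name for name in required if not params.get(name)]


# Hypothetical file content, for illustration only.
sample = "$$ETLPROCWID=12345\n$$DATASOURCENUMID=\n$$DFLTLANG=US\n"
print(missing_parameters(parse_parameters(sample)))
```

The check only tests for presence; it does not validate that a value is correct for your environment.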

Search for parameter values defined with the 'TODATE' function. Edit the function to use the appropriate function for data conversion based on the database type: - For Oracle databases, use 'TODATE('1899-01-01 00:00:00', 'YYYY-MM-DD HH24:MI:SS')' - For SQL Server databases, use 'CONVERT(datetime,'1899-01-01')' - For DB2 databases, use 'TODATE('1899-01-01 00:00:00', 'YYYY-MM-DD HH24:MI:SS')' - For Teradata databases, use 'cast('1899-01-01' as timestamp format 'YYYY-MM-DD')'. A.1.8 Setting Parameters in 7963UPGPARAMS.txt Follow this procedure to set parameters in the 7963UPGPARAMS.txt file. This procedure is applicable for upgrades to Oracle BI Applications release 7.9.6.3.

To set parameters in the 7963UPGPARAMS.txt file. Navigate to the folder OracleBI dwrep Upgrade Informatica ParameterFiles and copy the file 7963UPGPARAMS.txt into the SrcFiles folder on the Informatica Server machine, for example, server infashared SrcFiles. In the 7963UPGPARAMS.txt file, set the following global parameters:. $$ETLPROCWID. Set this parameter to the relevant value from the source system setup. You can get this value from WPARAMG.ETLPROCWID. $$WHDATASOURCENUMID.

Set this parameter to the data source number ID you have set up for the data warehouse. In the 7963UPGPARAMS.txt file, set the following common dimension parameters: Locate the section in the file that lists common dimension parameters for your source system, and set the following parameters. $$DATASOURCENUMID. Set this parameter to the relevant value from the source system setup. $$INITIALEXTRACTDATE. Set this parameter to the initial extraction date of the data warehouse.

$$DFLTLANG. The default value for this parameter is $$DFLTLANG=US. This value is appropriate for Oracle source systems when the transactional database language is English (US).

For Siebel and JD Edwards source systems with the transactional database language as English (US), set the value for the parameter to $$DFLTLANG=ENU. If the default language of your transactional database is not English (US), you need to set the DFLTLANG parameter to the appropriate language for your data source database. To find the value to specify, execute the following query against the transactional database. Select VAL from SSYSPREF where SYSPREFCD='ETL Default Language'; Note: PeopleSoft source systems do not use the $$DFLTLANG parameter. A.2 Setting Source System-Specific Parameters in Informatica Parameter Files This section provides instructions for setting parameters in the Informatica parameter files that are specific to various source systems. You may need to set or update these parameters depending on your environment. The topic headings below indicate the source system and version of the parameter file that require configuration.

Note: If you are using Oracle Financial Analytics and your source system is either PeopleSoft or Oracle EBS 11.5.10 family pack OIE.I and OIE.J, there are additional parameters you must set. For instructions, see Sections A.3.5.2 and A.3.5.3.

A.2.1 Setting Parameters in 796UPGPARAMS.txt for Oracle EBS 11i Source Systems

This procedure is only applicable to Oracle EBS 11i source systems and for upgrades to Oracle BI Applications release 7.9.6.

To set parameters in the 796UPGPARAMS.txt file for Oracle EBS 11i source systems:

1. Navigate to the SrcFiles folder on the Informatica Server machine, for example, server infashared SrcFiles.
2. Open the file 796UPGPARAMS.txt.
3. Note the values for the following parameters:
   - $$ORADATASOURCENUMIDLIST
   - $$GRAIN
   - $$GBLDATASOURCENUMID
   - $$QUALIFICATIONCATEGORYLIST
4. In DAC, go to the Design view, and select the appropriate custom container.
5. Select the Source System Parameters tab.
6. Query for the parameters listed in Step 3, and compare the values. If necessary, change the values for the parameters in the 796UPGPARAMS.txt file to match the values for the parameters in the DAC Source System Parameters tab.
7. Save the 796UPGPARAMS.txt file.

A.2.2 Setting Parameters in 796UPGPARAMS.txt for Siebel Source Systems

This procedure is only applicable to Siebel source systems and for upgrades to Oracle BI Applications release 7.9.6. To set parameters in the 796UPGPARAMS.txt file for Siebel source systems: Navigate to the SrcFiles folder on the Informatica Server machine, for example, server infashared SrcFiles. Open the file 796UPGPARAMS.txt. Note the values for the following parameters:

$$HIDT. $$LOWDT.

$$NAMEORDERWITHFIRSTNAME. In DAC, go to the Design view, and select the appropriate custom container. Select the Source System Parameters tab.

Query for the parameters listed in Step 3, and compare the values. If necessary, change the values for the parameters in the 796UPGPARAMS.txt file to match the values for the parameters in the DAC Source System Parameters tab. Save the 796UPGPARAMS.txt file.

A.2.3 Setting Parameters in 7962UPGPARAMS.txt for PeopleSoft Source Systems

If your source system is PeopleSoft, you must follow the instructions in this section after completing the instructions in Section A.1.7. This procedure is applicable for upgrades to Oracle BI Applications release 7.9.6.2.
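The compare-and-update steps in procedures A.2.1 and A.2.2 amount to a dictionary comparison. The Python sketch below assumes you have typed the DAC values into a dictionary by hand (no DAC API is assumed or used); the function name and the sample values are hypothetical.

```python
def find_mismatches(file_params, dac_params):
    """Return {name: (file_value, dac_value)} for parameters whose values differ.

    A parameter missing from the file is reported with a file value of None.
    """
    return {
        name: (file_params.get(name), dac_value)
        for name, dac_value in dac_params.items()
        if file_params.get(name) != dac_value
    }


# Hypothetical values, for illustration only.
from_file = {"$$HIDT": "A", "$$LOWDT": "B"}
from_dac = {"$$HIDT": "A", "$$LOWDT": "C", "$$NAMEORDERWITHFIRSTNAME": "Y"}
for name, (file_value, dac_value) in find_mismatches(from_file, from_dac).items():
    print(name, "file:", file_value, "DAC:", dac_value)
```

Any parameter reported here is one whose value in the parameter file should be changed to match DAC before saving the file.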

To set parameters in the 7962UPGPARAMS.txt file for PeopleSoft source systems. Open the 7962UPGPARAMS.txt file.

Note: Make sure you perform the procedure in Section A.1.7 before you begin this procedure. If you are using PeopleSoft version 8.9 or higher, set the following parameters in the 7962UPGPARAMS.txt file specific to the multiple product category enhancement: For the $$TREENAME1 parameter, set the value to the tree name for which you want to include the product categories. Generally, this value is ALLPURCHASEITEMS.

You can use the following SQL to obtain the TREENAMES: SELECT * FROM PSTREEDEFN WHERE TREESTRCTID = 'ITEMS'. The TREESTRUCT in the PeopleSoft Tree that defines the Item Category Tree generally has the value ITEMS. If you changed this value, you must update the parameter $$TREESTRUCTIDLIST with the appropriate value.

Verify the value for the PSFTOLTPVER parameter matches the version of PeopleSoft you are using. A.2.4 Setting Parameters in 7963UPGPARAMS.txt for Siebel Industry Applications Source Systems This procedure is applicable when you are upgrading to Oracle BI Applications release 7.9.6.3 and the source system is Siebel Industry Applications. To set parameters in the 7963UPGPARAMS.txt file for Siebel Industry Applications source systems. Navigate to the SrcFiles folder on the Informatica Server machine, for example, server infashared SrcFiles. Open the file 7963UPGPARAMS.txt. Search for the parameter $$VERTICALUPGRADE.

If you are using Siebel Industry Applications, set the value to 1. For example: $$VERTICALUPGRADE=1 If you are not using Siebel Industry Applications, leave the default value (0).

Save the 7963UPGPARAMS.txt file. A.3.1.1 Setting Parameters for Value Set Hierarchies and FSG Hierarchies In Oracle Financial Analytics, the default behavior is for Value Set Hierarchies to be enabled and Financial Statement Generator (FSG) Hierarchies to be disabled. If you have changed this behavior by disabling Value Set Hierarchies and enabling FSG Hierarchies, then you need to set the parameters that control this behavior in the 796UPGPARAMS.txt file and the DAC configuration tags. To set the FSG Hierarchies and Value Set Hierarchies parameters. Navigate to the SrcFiles folder on the Informatica Server machine, for example, server infashared SrcFiles.

Open the 796UPGPARAMS.txt file. Search for the parameter $$ISFSGHIERARCHYINSTALLED, and set the value to Y. For example: $$ISFSGHIERARCHYINSTALLED=Y.

Search for the parameter $$ISVALUESETHIERARCHYINSTALLED, and set the value to N. For example: $$ISVALUESETHIERARCHYINSTALLED=N. A.3.1.2.1 Setting GL Data Extraction Parameters for Oracle EBS 11i Sources Follow this procedure to set GL data extraction parameters for Oracle EBS 11i sources. To set GL data extraction parameters.

Navigate to the SrcFiles folder on the Informatica Server machine, for example, server infashared SrcFiles. Open the 796UPGPARAMS.txt file. Note the values for the following parameters:. $$FILTERBYSETOFBOOKSID.

$$FILTERBYSETOFBOOKSTYPE. $$SETOFBOOKSIDLIST. $$SETOFBOOKSTYPELIST. In DAC, go to the Design view, and select the appropriate custom container.

Select the Source System Parameters tab. Query for the parameters listed in Step 3, and compare the values.

If necessary, change the values for the parameters in the 796UPGPARAMS.txt file to match the values for the parameters in the DAC Source System Parameters tab. Save the 796UPGPARAMS.txt file. A.3.1.2.2 Setting GL Data Extraction Parameters for Oracle EBS R12 Sources Follow this procedure to configure GL data extraction parameters for Oracle EBS R12 sources.

To set GL data extraction parameters. Navigate to the SrcFiles folder on the Informatica Server machine, for example, server infashared SrcFiles. Open the 796UPGPARAMS.txt file. Note the values for the following parameters:.

$$FILTERBYLEDGERID. $$FILTERBYLEDGERTYPE. $$LEDGERIDLIST. $$LEDGERTYPELIST. In DAC, go to the Design view, and select the appropriate custom container.

Select the Source System Parameters tab. Query for the parameters listed in Step 3, and compare the values.

If necessary, change the values for the parameters in the 796UPGPARAMS.txt file to match the values for the parameters in the DAC Source System Parameters tab. Save the 796UPGPARAMS.txt file. A.3.1.3 Setting the COGS Fact Mapping for Oracle EBS R12 For Oracle EBS R12 sources, follow this procedure to update the COGS fact mapping. To set the COGS fact mapping. Launch Informatica PowerCenter Designer.

Navigate to the folder UPGRADE7951TO796ORA12. Open the mapping SDEORAGLCOGSFactUPG796.

Open the mapplet mpltBCORAGLCOGSFact. Open the Source Qualifier Transformation, do the following:.

Open the SQL Query property. In the WHERE clause of the query locate the hard-coded filter on the Transaction Type ID and Transaction Action ID. For example: MMT.TRANSACTIONTYPEID IN (15, 33, 10008) AND (MMT.TRANSACTIONACTIONID,MTA.ACCOUNTINGLINETYPE) IN ((27, 2), (1, 36), (36, 35)). Change the values to the actual values you used in the SDEORAGLCOGSFact mapping in the base Informatica Repository code. A.3.1.4 Setting the $$Hint1 Parameter for Oracle Databases If your target data warehouse is an Oracle database server, follow this procedure to set the $$Hint1 parameter.

To set the $$Hint1 parameter. Navigate to the SrcFiles folder on the Informatica Server machine, for example, server infashared SrcFiles. Open the 796UPGPARAMS.txt file.

Locate the $$Hint1 parameter for the appropriate version of the Oracle database, and enter the following value: /*+ USEHASH(WGLBALANCEF, WGLACCOUNTD, WGLACCTSEGCONFIGTMP) */ For example: mpltGLBalanceAggrByAcctSegCodes.$$Hint1=/*+ USEHASH(WGLBALANCEF, WGLACCOUNTD, WGLACCTSEGCONFIGTMP) */. Save the 796UPGPARAMS.txt file.

A.3.2 Setting Parameters in 796UPGPARAMS.txt for Oracle Project Analytics

If you are deploying Oracle Project Analytics and you are upgrading to Oracle BI Applications release 7.9.6, follow the procedure in this section to configure the ISPROJECTSINSTALLED parameter. To set the ISPROJECTSINSTALLED parameter: Navigate to the SrcFiles folder on the Informatica Server machine, for example, server infashared SrcFiles.

Open the 796UPGPARAMS.txt file. Search for the parameter $$ISPROJECTSINSTALLED, and set the value to Y. For example: $$ISPROJECTSINSTALLED=Y.

Save the 796UPGPARAMS.txt file. A.3.3.1 Setting the TIMEGRAIN Parameter for Sales Order Lines Aggregate Fact and Invoice Lines Aggregate Fact Tables If you are deploying Oracle Supply Chain and Order Management Analytics, you need to configure the TIMEGRAIN parameter for the Sales Order Lines Aggregate Fact table and for the Invoice Lines Aggregate Fact table. For instructions, see the section titled, 'Process of Aggregating Oracle Supply Chain and Order Management,' in Oracle Business Intelligence Applications Configuration Guide for Informatica PowerCenter Users. A.3.4.1 Setting GL Data Extraction Parameters for Oracle EBS 11i Sources Follow this procedure to configure GL data extraction parameters for Oracle EBS 11i sources. To set GL data extraction parameters. Navigate to the SrcFiles folder on the Informatica Server machine, for example, server infashared SrcFiles.

Open the 7962UPGPARAMS.txt file. Note the values for the following parameters:. mpltBCORAGLBalanceFact.$$FILTERBYSETOFBOOKSID. mpltBCORAGLBalanceFact.$$FILTERBYSETOFBOOKSTYPE. mpltBCORAGLBalanceFact.$$SETOFBOOKSIDLIST. mpltBCORAGLBalanceFact.$$SETOFBOOKSTYPELIST.

In DAC, go to the Design view, and select the appropriate custom container. Select the Source System Parameters tab. Query for the parameters listed in Step 3, and compare the values. If necessary, change the values for the parameters in the 7962UPGPARAMS.txt file to match the values for the parameters in the DAC Source System Parameters tab.

Save the 7962UPGPARAMS.txt file. A.3.4.2 Setting GL Data Extraction Parameters for Oracle EBS 12 Sources Follow this procedure to configure GL data extraction parameters for Oracle EBS 12 sources.

To set GL data extraction parameters. Navigate to the SrcFiles folder on the Informatica Server machine, for example, server infashared SrcFiles. Open the 7962UPGPARAMS.txt file. Note the values for the following parameters:. mpltBCORAGLBalanceFact.$$FILTERBYLEDGERID. mpltBCORAGLBalanceFact.$$FILTERBYLEDGERTYPE.

mpltBCORAGLBalanceFact.$$LEDGERIDLIST. mpltBCORAGLBalanceFact.$$LEDGERTYPELIST. In DAC, go to the Design view, and select the appropriate custom container. Select the Source System Parameters tab. Query for the parameters listed in Step 3, and compare the values. If necessary, change the values for the parameters in the 7962UPGPARAMS.txt file to match the values for the parameters in the DAC Source System Parameters tab.

Save the 7962UPGPARAMS.txt file. A.3.4.3 Setting GL Data Extraction Parameters for PeopleSoft Sources Follow this procedure to configure GL data extraction parameters for PeopleSoft 8.8, 8.9, and 9.0 sources. To set GL data extraction parameters. Navigate to the SrcFiles folder on the Informatica Server machine, for example, server infashared SrcFiles. Open the 7962UPGPARAMS.txt file.

Note the values for the following parameters:. mpltBCPSFTGLBalanceFact.$$FISCALYEAR. $$LOWDATE.

In DAC, go to the Design view, and select the appropriate custom container. Select the Source System Parameters tab.

Query for the parameters listed in Step 3, and compare the values. If necessary, change the values for the parameters in the 7962UPGPARAMS.txt file to match the values for the parameters in the DAC Source System Parameters tab. A.3.4.4 Setting the COGS Fact Mapping for Oracle EBS R12 For Oracle EBS R12 sources, follow this procedure to update the COGS fact mapping. To set the COGS fact mapping. Launch Informatica PowerCenter Designer. Navigate to the folder UPGRADE7961to7962ORAR12.

Open the mapping SDEORAGLCOGSFactUPG7962. Open the mapplet mpltBCORAGLCOGSFact. Open the Source Qualifier Transformation, and do the following:.

Open the SQL Query property. In the WHERE clause of the query, locate the hard-coded filter on the Transaction Type ID and Transaction Action ID. For example: MMT.TRANSACTIONTYPEID IN (15, 33, 10008) AND (MMT.TRANSACTIONACTIONID,MTA.ACCOUNTINGLINETYPE) IN ((27,2),(1,36),(36,35)). Change the values to the actual values you used in the SDEORAGLCOGSFact mapping in the main Informatica Repository code. A.3.5.1 Setting Parameters for All Source Systems This section provides instructions for setting parameters in the 7963UPGPARAMS.txt file for Oracle Financial Analytics for all source systems, including Oracle EBS 11i, Oracle EBS 12, PeopleSoft, and JD Edwards. To set the soft delete parameter. Navigate to the SrcFiles folder on the Informatica Server machine, for example, server infashared SrcFiles.

Open the 7963UPGPARAMS.txt file. Note the value for the parameter $$ISSOFTDELETEIMPLEMENTED. The default value for this parameter is $$ISSOFTDELETEIMPLEMENTED=N. If the Soft Delete parameter is implemented for any of the Oracle Financial Analytics modules, for example, GL, AP, AR, and Profitability (COGS/Revenue), you must change the parameter value to $$ISSOFTDELETEIMPLEMENTED=Y for the appropriate module for which it is implemented.

Save the 7963UPGPARAMS.txt file. A.3.5.2 Setting Parameters Specific to PeopleSoft This section provides instructions for setting a parameter for Oracle Financial Analytics in the 7963UPGPARAMS.txt file that is specific to PeopleSoft source systems. To set the parameter specific to PeopleSoft source systems. Navigate to the SrcFiles folder on the Informatica Server machine, for example, server infashared SrcFiles.

Open the 7963UPGPARAMS.txt file. The parameter $$PSFTOLTPVER specifies the version of the PeopleSoft OLTP. The default value for this parameter is $$PSFTOLTPVER=90. Change the parameter value to match the appropriate version of the PeopleSoft OLTP. For example:. For PeopleSoft version 8.9, enter $$PSFTOLTPVER=89. For PeopleSoft version 9.0, enter $$PSFTOLTPVER=90.

For PeopleSoft version 9.1, enter $$PSFTOLTPVER=91. Note: This parameter is needed to run the Transaction type dimension maps, which are missing in the PeopleSoft 8.9 source system.

Therefore, setting this parameter is particularly important if you are using PeopleSoft 8.9. Save the 7963UPGPARAMS.txt file. A.3.5.3 Setting Parameters Specific to Oracle EBS 11.5.10 Family Pack OIE.I and OIE.J This section provides instructions for setting a parameter for Oracle Financial Analytics in the 7963UPGPARAMS.txt file that is specific to Oracle EBS 11.5.10 family pack OIE.I and OIE.J. To set the parameter specific to Oracle EBS 11.5.10 OIE.I or OIE.J. Navigate to the SrcFiles folder on the Informatica Server machine, for example, server infashared SrcFiles. Open the 7963UPGPARAMS.txt file.

The parameter $$ORAOLTPVER specifies the version of the Oracle EBS OLTP. Change the parameter value to match the appropriate version of the family pack for your Oracle EBS 11.5.10 environment. For Oracle EBS 11.5.10 family pack OIE.I, enter $$ORAOLTPVER=OIEI. For Oracle EBS 11.5.10 family pack OIE.J, enter $$ORAOLTPVER=OIEJ.

Save the 7963UPGPARAMS.txt file. A.3.6 Setting Parameters in 7963UPGPARAMS.txt for Oracle Human Resources Analytics If you are deploying Oracle Human Resources Analytics and you are upgrading to Oracle BI Applications release 7.9.6.3, follow the procedure in this section to set parameters in 7963UPGPARAMS.txt. To set the parameter for Oracle Human Resources. Navigate to the SrcFiles folder on the Informatica Server machine, for example, server infashared SrcFiles. Open the 7963UPGPARAMS.txt file. Search for the parameter $$HRWRKFCEXTRACTDATE.

The parameter value is defined with the 'TODATE' function. Edit the function to use the appropriate function for data conversion based on the database type: - For Oracle databases, use 'TODATE('1899-01-01 00:00:00', 'YYYY-MM-DD HH24:MI:SS')' - For SQL Server databases, use 'CONVERT(datetime,'1899-01-01')' - For DB2 databases, use 'TODATE('1899-01-01 00:00:00', 'YYYY-MM-DD HH24:MI:SS')' - For Teradata databases, use 'cast('1899-01-01' as timestamp format 'YYYY-MM-DD')'. Save the 7963UPGPARAMS.txt file. A.3.7 Setting Parameters in 7963UPGPARAMS.txt for Oracle Procurement and Spend Analytics on PeopleSoft Source System Follow the procedure in this section if you are deploying Oracle Procurement and Spend Analytics on a PeopleSoft 9.0 source system. To set the parameters for Oracle Procurement and Spend Analytics. Navigate to the SrcFiles folder on the Informatica Server machine, for example, server infashared SrcFiles.

Open the 7963UPGPARAMS.txt file. Set the parameter $$PSFTDATASOURCENUMID to the relevant value from the source system setup.

Save the 7963UPGPARAMS.txt file. A.3.8 Setting Parameters in 7963UPGPARAMS.txt for Oracle Procurement and Spend Analytics on Oracle EBS 11i and 12 Source Systems Follow the procedure in this section if you are deploying Oracle Procurement and Spend Analytics on Oracle EBS 11i and 12 source systems. To set the parameters for Oracle Procurement and Spend Analytics. Navigate to the SrcFiles folder on the Informatica Server machine, for example, server infashared SrcFiles.

Open the 7963UPGPARAMS.txt file. Search for the entry: UPGRADE7962to7963ORA.SILPurchaseCycleLinesFactUPG7963. Set the parameter $$ORADATASOURCENUMID to the relevant value from the source system setup. The default value for Oracle EBS 11i is $$ORADATASOURCENUMIDLIST=(4). The default value for Oracle EBS 12 is $$ORADATASOURCENUMIDLIST=(9). Save the 7963UPGPARAMS.txt file.

The values are sent in the request body, in the format that the content type specifies. Usually the content type is application/x-www-form-urlencoded, so the request body uses the same format as the query string:

    parameter=value&also=another

When you use a file upload in the form, you use the multipart/form-data encoding instead, which has a different format. It's more complicated, but you usually don't need to care what it looks like, so I won't show an example, but it can be good to know that it exists. The content is put after the HTTP headers.
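To make the "same format as the query string" point concrete, here is a small Python sketch using the standard library's `urllib.parse`. The helper names `encode_form` and `decode_form` are just wrappers made up for this example.

```python
from urllib.parse import parse_qs, urlencode


def encode_form(fields):
    """Encode a dict as an application/x-www-form-urlencoded body."""
    return urlencode(fields)


def decode_form(body):
    """Decode a form-urlencoded body; each key maps to a list of values."""
    return parse_qs(body)


body = encode_form({"parameter": "value", "also": "another"})
print(body)               # the text that goes in the request body
print(decode_form(body))  # what the server side gets back after decoding
```

Spaces in keys or values are written as `+` by `urlencode`, and `parse_qs` turns them back into spaces when decoding.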

The format of an HTTP POST is to have the HTTP headers, followed by a blank line, followed by the request body. The POST variables are stored as key-value pairs in the body. You can see this in the raw content of an HTTP POST, shown below:

    POST /path/script.cgi HTTP/1.0
    From: [email protected]
    User-Agent: HTTPTool/1.0
    Content-Type: application/x-www-form-urlencoded
    Content-Length: 32

    home=Cosby&favorite+flavor=flies

You can see this using a tool that lets you watch the raw HTTP request and response payloads being sent across the wire.

Short answer: in POST requests, values are sent in the 'body' of the request. With web forms they are most likely sent with a media type of application/x-www-form-urlencoded or multipart/form-data. Programming languages or frameworks which have been designed to handle web requests usually do 'The Right Thing™' with such requests and provide you with easy access to the readily decoded values (like $_REQUEST or $_POST in PHP, or cgi.FieldStorage, flask.request.form in Python).

Now let's digress a bit, which may help understand the difference ;) The difference between GET and POST requests is largely semantic.

They are also 'used' differently, which explains the difference in how values are passed.

GET

When executing a GET request, you ask the server for one entity or a set of entities.

To allow the client to filter the result, it can use the so-called 'query string' of the URL. The query string is the part after the '?'. This is part of the URI. So, from the point of view of your application code (the part which receives the request), you will need to inspect the URI query part to gain access to these values. Note that the keys and values are part of the URI. Browsers may impose a limit on URI length.

The HTTP standard states that there is no limit. But at the time of this writing, most browsers do limit the URIs (I don't have specific values). GET requests should never be used to submit new information to the server. Especially not larger documents. That's where you should use POST or PUT.

POST

When executing a POST request, the client is actually submitting a new document to the remote host. So, a query string does not (semantically) make sense.

Which is why you don't have access to them in your application code. POST is a little bit more complex (and way more flexible): When receiving a POST request, you should always expect a 'payload', or, in HTTP terms: a message body. The message body in itself is pretty useless, as there is no standard format (as far as I can tell; maybe application/octet-stream?). The body format is defined by the Content-Type header. When using an HTML FORM element with method='POST', this is usually application/x-www-form-urlencoded.

Another very common type is multipart/form-data, if you use file uploads. But it could be anything, ranging from text/plain, over application/json or even a custom application/octet-stream. In any case, if a POST request is made with a Content-Type which cannot be handled by the application, it should return a 415 status code. Most programming languages (and/or web frameworks) offer a way to de/encode the message body from/to the most common types (like application/x-www-form-urlencoded, multipart/form-data or application/json). So that's easy.

Custom types potentially require a bit more work. Using a standard HTML form-encoded document as an example, the application should perform the following steps:

- Read the Content-Type field.
- If the value is not one of the supported media types, return a response with a 415 status code.
- Otherwise, decode the values from the message body.

Again, languages like PHP, or web frameworks for other popular languages, will probably handle this for you. The exception to this is the 415 error. No framework can predict which content types your application chooses to support or not support.
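The steps above can be sketched in a few lines of Python. This is a minimal, framework-free illustration: the function name `decode_body` and the choice of supported media types are assumptions made for this example, and a real application would plug the same logic into whatever request object its framework provides.

```python
import json
from urllib.parse import parse_qs


def decode_body(content_type, body):
    """Return (status_code, decoded_values) for a request body given as bytes."""
    # Step 1: read the Content-Type field, ignoring parameters such as charset.
    media_type = content_type.split(";", 1)[0].strip().lower()

    # Step 3: decode the values for the media types this application supports.
    if media_type == "application/x-www-form-urlencoded":
        return 200, parse_qs(body.decode("utf-8"))
    if media_type == "application/json":
        return 200, json.loads(body.decode("utf-8"))

    # Step 2: anything else is answered with 415 Unsupported Media Type.
    return 415, None


print(decode_body("application/x-www-form-urlencoded", b"home=Cosby&favorite+flavor=flies"))
print(decode_body("text/plain", b"hello"))
```

For simplicity the sketch assumes UTF-8 bodies rather than honouring a `charset` parameter.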

This is up to you.

PUT

A PUT request is pretty much handled in the exact same way as a POST request. The big difference is that a POST request is supposed to let the server decide how to (and if at all) create a new resource. Historically (per the now obsolete RFC 2616), it was to create a new resource as a 'subordinate' (child) of the URI to which the request was sent. A PUT request, in contrast, is supposed to 'deposit' a resource exactly at that URI, and with exactly that content. No more, no less. The idea is that the client is responsible for crafting the complete resource before 'PUTting' it.

The server should accept it as-is on the given URL. As a consequence, a POST request is usually not used to replace an existing resource. A PUT request can do both create and replace.

Side note

There are also parameters embedded in path segments, which can be used to send additional data to the remote, but they are so uncommon that I won't go into too much detail here. But, for reference, here is an excerpt from the RFC: Aside from dot-segments in hierarchical paths, a path segment is considered opaque by the generic syntax.

URI producing applications often use the reserved characters allowed in a segment to delimit scheme-specific or dereference-handler-specific subcomponents. For example, the semicolon (';') and equals ('=') reserved characters are often used to delimit parameters and parameter values applicable to that segment. The comma (',') reserved character is often used for similar purposes. For example, one URI producer might use a segment such as 'name;v=1.1' to indicate a reference to version 1.1 of 'name', whereas another might use a segment such as 'name,1.1' to indicate the same. Parameter types may be defined by scheme-specific semantics, but in most cases the syntax of a parameter is specific to the implementation of the URIs dereferencing algorithm.
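As a small illustration of the semicolon form mentioned in the excerpt, the Python sketch below splits a path segment such as 'name;v=1.1' into its name and parameters. The function name `parse_segment` is made up for this example, and the comma form ('name,1.1') is deliberately not handled, since, as the excerpt says, the syntax is implementation-specific.

```python
def parse_segment(segment):
    """Split 'name;key=value;...' into (name, {key: value})."""
    name, *raw_params = segment.split(";")
    params = {}
    for raw in raw_params:
        key, _, value = raw.partition("=")
        params[key] = value  # a parameter without '=' gets an empty value
    return name, params


print(parse_segment("name;v=1.1"))
```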

You cannot type it directly into the browser URL bar. You can see how POST data is sent on the Internet with a tool that displays raw HTTP traffic. Some web services require you to place request data and metadata separately.

For example, a remote function may expect that the signed metadata string is included in a URI, while the data is posted in an HTTP body. The POST request may semantically look like this:

    POST /?AuthId=YOURKEY&Action=WebServiceAction&Signature=rcLXfkPldrYm04 HTTP/1.1
    Content-Type: text/tab-separated-values; charset=iso-8859-1
    Content-Length:
    Host: webservices.domain.com
    Accept: text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8
    Accept-Encoding: identity
    User-Agent: Mozilla/3.0 (compatible; Indy Library)

    name id
    John G12N
    Sarah J87M
    Bob N33Y

This approach logically combines QueryString and Body-Post using a single Content-Type which is a 'parsing-instruction' for a web server. Please note: HTTP/1.1 is wrapped with the #32 (space) on the left and with #10 (Line feed) on the right.
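On the receiving side, such a request can be taken apart with the Python standard library: the metadata comes from the query part of the request target and the data from the tab-separated body. This is only a sketch; the function name `split_request` is hypothetical, and the body is assumed to be real tab-separated text with a header row.

```python
import csv
import io
from urllib.parse import parse_qs, urlsplit


def split_request(target, body):
    """Return (query_parameters, rows) for a request target and a TSV body."""
    query = parse_qs(urlsplit(target).query)
    rows = list(csv.DictReader(io.StringIO(body), delimiter="\t"))
    return query, rows


target = "/?AuthId=YOURKEY&Action=WebServiceAction&Signature=rcLXfkPldrYm04"
body = "name\tid\nJohn\tG12N\nSarah\tJ87M\nBob\tN33Y\n"
query, rows = split_request(target, body)
print(query["Action"], [row["id"] for row in rows])
```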